TROUBLESHOOTING

Audio Myths & DAW Wars

Three things you need to know about audio quality

- Settings - There are many traps for young players when comparing audio from two DAWs. Many settings can differ between them, so make sure you know what they are (discussed below).

- Bits & Hz - Research has shown that for music distribution, 16 Bit @ 44.1 kHz (CD standard) is indistinguishable from 24 Bit @ 192 kHz, in a sample of over 550 listeners [1]. In other words, more bits and higher sample rates are not going to improve the 'quality' of your tracks.

- Skill - The world is full of marketing departments trying to convince you that equipment and specifications can substitute for talent & hard work. This is not true; the 'performance' transcends the medium every time. The performance includes musicianship, vocals, orchestration, arrangement and mixing decisions. These are all under your control and have little to do with the DAW software you use or the plugins you have.
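As a rough worked example of what the CD standard already provides, the figures can be computed from two textbook rules: a sample rate can represent frequencies up to half its value (the Nyquist frequency), and each bit of integer PCM adds about 6.02 dB of theoretical dynamic range, since 20·log10(2) ≈ 6.02. This minimal Python sketch assumes the commonly quoted 20 kHz upper limit of human hearing; the helper names are illustrative, and the results are idealized figures that ignore real-world converter noise.

```python
import math

# Commonly quoted upper limit of human hearing (an assumption; it falls with age).
HEARING_LIMIT_HZ = 20_000


def nyquist_hz(sample_rate_hz: float) -> float:
    """Highest frequency a given sample rate can represent: half the rate."""
    return sample_rate_hz / 2


def dynamic_range_db(bits: int) -> float:
    """Idealized dynamic range of N-bit integer PCM: 20*log10(2**N), about 6.02 dB per bit."""
    return 20 * math.log10(2 ** bits)


for bits, rate in [(16, 44_100), (24, 192_000)]:
    print(
        f"{bits} Bit @ {rate / 1000:g} kHz: "
        f"Nyquist {nyquist_hz(rate) / 1000:g} kHz, "
        f"dynamic range {dynamic_range_db(bits):.1f} dB, "
        f"covers {HEARING_LIMIT_HZ / 1000:g} kHz hearing: {nyquist_hz(rate) >= HEARING_LIMIT_HZ}"
    )
```

Running it gives about 96.3 dB for 16 Bit and 144.5 dB for 24 Bit, with both sample rates reaching past 20 kHz. The same function gives about 120.4 dB for 20 Bit, which matches the figure quoted for the best converters under Electronics.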

Audio quality, the eternal quest

Spend time on any forum devoted to Digital Audio Workstation (DAW) software or music production and you are guaranteed to see users claiming superior audio quality for this or that DAW application. Protagonists will say a given program is clearly and audibly superior to another. To be frank, that's just nonsense. Any DAW application that uses at least 32 Bit floating point calculations (and today that means all mainstream DAW software) will process audio without introducing unwanted distortions, frequency response alterations or any other effect that would be 'clearly audible' enough to influence opinion. This ability to process audio without making unintended, audible changes is called 'transparency'. From a transparency perspective, all DAW software is created equal.
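To get a feel for why 32 Bit floating point processing is transparent, this sketch pushes a quiet test tone through a long chain of gain changes three ways: in Python's native 64 Bit floats (used as the reference), in emulated 32 Bit floats, and in 16 Bit integers that are rounded back to whole numbers after every step. The gain values and test tone are arbitrary assumptions, and this is not how any particular DAW's engine is written; the point is only the size of the accumulated rounding error relative to full scale.

```python
import math
import struct


def to_f32(x: float) -> float:
    """Round a Python float (64 Bit) to the nearest 32 Bit float value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def error_dbfs(processed, reference) -> float:
    """RMS of the difference from the reference, in dB relative to full scale (1.0)."""
    mean_sq = sum((p - r) ** 2 for p, r in zip(processed, reference)) / len(reference)
    return 20 * math.log10(math.sqrt(mean_sq))


SR = 44_100
# 0.1 s of a quiet 1 kHz sine peaking at -40 dBFS.
tone = [0.01 * math.sin(2 * math.pi * 1000 * n / SR) for n in range(SR // 10)]

# Hypothetical chain of 80 fader/plugin gain changes, roughly unity overall.
gains = [0.5, 1.7, 0.8, 1.3, 0.6, 1.9, 0.9, 1.1] * 10

ref = list(tone)                        # 64 Bit float path, used as the reference
f32 = [to_f32(x) for x in tone]         # 32 Bit float path
i16 = [round(x * 32767) for x in tone]  # 16 Bit integer path
for g in gains:
    g32 = to_f32(g)
    ref = [x * g for x in ref]
    # The 64 Bit product of two 32 Bit values is exact, so one to_f32() gives true 32 Bit rounding.
    f32 = [to_f32(x * g32) for x in f32]
    # 16 Bit path: round back to whole numbers (and clip) after every step.
    i16 = [max(-32768, min(32767, round(x * g))) for x in i16]

print(f"32 Bit float error:   {error_dbfs(f32, ref):.1f} dBFS")
print(f"16 Bit integer error: {error_dbfs([x / 32767 for x in i16], ref):.1f} dBFS")
```

In runs of this sketch, expect the 32 Bit float error to land many tens of dB below the 16 Bit integer error. This mirrors the point made under DAW Bit depth below: 16 Bit rounding errors accumulate towards the audible range in quiet passages, while 32 Bit float errors stay far below it.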

Digital Audio Primer

How digital audio really works. Before progressing we strongly recommend you take the time to watch these videos, from start to end. Both cover the same basic information from slightly different perspectives. They outline some indisputable and factual basics about how digital audio works. Everyone working in the audio industry should take some time to understand these first principles.

The psychology of sound

So why do people 'hear' differences in DAW quality, why do these beliefs persist, and why do you see 'famous' producers proclaiming the audio quality of one DAW application over all others? Surely these guys know what they are talking about? The answer is, as usual, complex.

First, recording engineers and 'famous people' are humans subject to psychological and perceptual limitations (see: placebo effect, Hawthorne effect, novelty effect, etc.). When industry professionals compare or assess equipment, it's (almost?) never done under controlled and scientifically validated conditions, for example a statistically meaningful number of forced-choice comparisons made under double-blind listening conditions in a controlled experiment. Unless they have done this, their subjective opinions about audio quality should be ignored (yes, really). While many industry professionals & musicians may have long and successful careers mixing & producing music, they are still prone to cause-and-effect misattributions when listening subjectively. That's why we say: do the blind listening experiment.

Related to the above, companies send their product/s out free to as many industry and famous people as they can find. This group tries the product and a sub-group think it "sounds great" or "better than anything they have ever heard". You will find these people in any sample. It's this sub-group and their quotes that appear in the marketing blurb for the product/s. Now don't get us wrong, these people really do think the product sounds great, but it's a subjective impression and hardly qualifies as proof that the product is any better than others in the market or even an improvement on what went before.

A second, and more important, cause is the many settings and options that can affect the live and rendered audio from any DAW. It's unlikely that any two DAWs will make exactly the same sound 'out of the box'. The following list explains what these settings and options are, gives you a broader perspective on what really can make a difference and will, hopefully, protect you from the marketing machine:

- Loudness - In a comparison, louder always sounds 'better' than quieter. It's almost guaranteed that the same track rendered from any two DAWs will differ by a few dB, and this is the most common cause of the 'DAW wars'. The louder of two otherwise identical sounds will seem to have more bass and clearer high frequencies (as mastering engineer Bob Katz describes). This comes from the way our ears work, not from anything in the audio itself. So yes, one DAW really did sound better, because it was louder. This is why people are so adamant on Internet forums: they really did hear it with their own ears. Compounding the problem, these small differences may not be apparent from casual inspection of the peak meters. "Yeah, the levels were the same." No, they probably weren't. Be very careful: level differences of 1-3 dB may not even seem 'louder', but they do sound 'clearer', 'bassier' or 'crisper'. As a rule of thumb, 1 dB is about the smallest level difference listeners can detect in a mix (0.2-0.5 dB in a laboratory setting). So if you are comparing audio from various sources, they need to be matched to within 0.5 dB! Seriously. Everything needs to be the same, especially the audio files you use for comparisons. Apart from basic mix decisions, there are a number of reasons why sound rendered from FL Studio may be quieter or louder than the same project rendered from another DAW.

- Your mixing decisions - See the section in the manual on Levels and Mixing. This is where the magic happens. If you can mix well, your music will probably sound great no matter what the technical specifications of the DAW are. Mixing is a craft and takes years to learn, just like any musical instrument. So if your mixes sound bad compared to commercial mixes, this is, with 99.99% probability, the reason why. The DAW doesn't suck, you do. Also be aware that you don't need more sophisticated mixing tools than a nice Parametric EQ, a Compressor/Limiter and the basic Mixer functions. All those 'mastering' plugins are useful tools that can save time, but they are no substitute for experience and a methodical workflow. If you want some idea of how the sound itself can influence you emotionally, load Harmless in a default project and start working your way through the presets. Some sound thin and reedy; others will blow your socks off. It's all about the sounds mixed together (the performance), not the technical specs of the DAW. Now imagine how hard it is to separate the performance from the technical aspects of the DAW when it comes to our emotional reaction to a platform. All too often, great mixing, performance or patch programming is mistaken for product design or specifications.

- Live Mixing interpolation - This applies to Sampler Channels when transposing samples away from their root note. Plugin instruments may have their own live vs rendered interpolation settings. When sample rates are converted (pitch shifting a sample, for example), the DAW needs to calculate new sample values between the existing points. Interpolation is about making an accurate prediction of what those values should be, and so reducing the interpolation errors that are heard as aliasing and/or noise.

- Rendered audio settings - These include the WAV bit-depth setting, the MP3/OGG bit-rate setting and Sampler interpolation. The WAV bit-depth (16, 24 or 32) won't have a noticeable impact on the sound you hear. However, the lossy formats (mp3 & ogg) definitely will introduce audible 'garbling' or 'underwater' sounds when used at bit-rates below about 190 kbps. These formats are really intended for music distribution, and can sound spectacularly good at bit-rates of 240 kbps and above. Sampler interpolation is the same feature discussed under Live Mixing interpolation above, but here it applies to rendered files. If you are hearing differences between the live and rendered sound, make sure the live and rendered interpolation settings match.

- Plugins behaving badly - We have a manual page, 'Plugins behaving badly', dedicated to this. Some plugins just sound bad or make strange noises when used with the wrong settings. FL Studio has a lot of Wrapper settings to give you the widest compatibility with poorly programmed plugins.

- Plugins behaving differently - This trips many people up when they render the same synth from two DAWs and compare the waveforms under a microscope. Synthesizers usually have some randomization and/or free-running oscillators (meaning the phase of the waveform changes as a function of the note start time), since most synths are deliberately designed not to produce exactly the same waveform twice. Make sure to disable any randomization settings and to send the same notes, at the same velocities, with the same modulation settings. A better strategy is to use a .wav file as the test source; that way you know it's identical to start with in each DAW.

- Marketing has influenced you - Yes, it has. Digital audio is just a stream of numbers. Computers add up numbers in a well understood and predictable way; if they didn't, we'd constantly have satellites raining down on us from the sky. We are talking here about basic mathematics (addition, subtraction, multiplication and division). There is no magic, and there are no secrets that some DAW manufacturers know and others don't. Dithering and interpolation are well understood, and most DAW software gives you plenty of options to control them. But understand that the suppliers of professional and consumer audio equipment have a strong vested interest in convincing you that you need to upgrade to the latest and greatest gear/format; that's how they make money, selling gear based on specifications. Audio quality ceased to be a meaningful differentiator of music production software platforms once they had all moved to 32 Bit float internal processing. Because the effect of a lifetime's marketing has been so powerful, let's consider three aspects associated with bit-depth and sample rate:

- DAW Bit depth - Anyone who has used a calculator will know that when you perform mathematical operations you only have so many decimal places, so you get rounding errors, and these errors accumulate in the least significant digits. The same thing happens when processing digital audio. If you have 16 Bit numbers representing your data, these rounding errors can creep into the audible realm, particularly in very quiet passages of music. A 32 Bit floating point format allows mathematical operations to be performed on audio without rounding errors becoming audible. Before you ask: no, 64 Bit is not subjectively better. Yes, there are a few exceptional circumstances that can be concocted to create audible artifacts in a 32 Bit floating point format, but the same is true of 64 Bit float, and these cases are not worth considering as a driving factor in the perception of 'quality'.

It's worth noting that even with 32 Bit processing, intermediate calculations can use the IEEE floating-point double extended precision (80 Bit) format where needed. 64 Bit processing is technically a step down from that, but for audio DSP the difference between 64 and 80 Bit precision is not relevant. If you hear a difference between DAW applications, it's coming from a setting, effect or option (see the list on this page), not from some inherent quality of the 'audio engine'.

NOTE: If you turn the volume up to maximum on a long-decaying sound stored in 16 vs 24 bit, you will probably hear the difference as quantization noise. But this is not how we listen to music. When the normal music started again, you would blow your speakers and your hearing. At normal, or even loud, listening levels those noises are not audible in a domestic environment. As you read around the internet forums, you will see many people cite 'volume-cranked' listening as evidence that the difference between 16 and 24 bit audio files affects the listening experience. Research [1] shows it doesn't.

- Electronics - The best analog-to-digital circuits that can currently be implemented in commercially available 'professional' audio equipment are equivalent to, at best, 20 Bit, a dynamic range of about 120 dB. Yes, all those 24 Bit recordings are actually delivering somewhere between 18 and 20 bits of real-world precision once they have been mangled by the best converters and room-temperature electronics you can buy. What this means is that even a lowly 24 Bit file has outstripped the ability of our electronics to reproduce it: the noise inherent in the electronic components swamps the remaining resolution. Sample rates, on the other hand, can go almost as high as you want (although some amplifiers behave badly when fed ultrasonic signals, so even this can be an issue), but as the study cited on this page shows [1], more than 44.1 kHz is a waste for music distribution. Time to stop worrying about the technical specifications of DAWs as a driver of 'quality' and concentrate on the other things in this list that matter more, like mixing and performance.

- The weakest link - Human hearing. Surely 24 Bit 192 kHz wav files sound superior to the 16 Bit 44.1 kHz wav files used on CDs? As we have been referencing, the largest and best study conducted to date [1] shows that there is no audible difference between 'high-end' audio formats (~24 Bit @ 192 kHz) and 16 Bit @ 44.1 kHz (CD standard). A user-friendly article discussing the research is The Emperor's New Sampling Rate.

What they found - From a sample of 554 listeners that included professionals, the general population & young listeners (prized for their high-frequency hearing), the number who correctly identified the higher quality audio was 276, or 49.8%. That is essentially what you would expect if you just flipped a coin 554 times or asked untrained monkeys to do the task. No sub-group outperformed chance. In summary, 16 Bit @ 44.1 kHz sound is indistinguishable from ~24 Bit @ 192 kHz for music distribution. Yes, 32 Bit is important for audio processing in a DAW application, but once it comes time to convert that audio into a format for distribution and human consumption, you can't meaningfully improve on the CD standard.


- Your audio device or operating system mixer & Media player - Make sure you don't have any EAX, compressor, EQ or similar settings enabled in the audio device or Windows settings. Dig deep here; sometimes they are well hidden in 'advanced' options tabs. One trap we sometimes see is people comparing two DAWs that are using different audio device drivers, for example one DAW using ASIO drivers while the other uses the Windows DirectSound driver. The settings associated with the driver can have massive effects on the sound you hear.

- You have influenced you - You can't make an unbiased comparison of the audio from two sources, A and B, if you know which source you are listening to at any given time. You just can't, so forget it. Perceptual psychologists realized this over 100 years ago and developed many useful methods to work around it, in particular the 'blind listening' experiment with an 'objective response indicator'. An objective response indicator is one where there is a right or wrong answer: for example, is this sample A or sample B? (Not which one sounds better; that's subjective.) Get a friend to play you the two sources in random-order pairs. Your task is simply to identify source A and source B, nothing more, nothing less. If you can correctly tell source A from source B at least 8 times out of 10 random-order paired comparisons, then you may be able to hear something. If not, you are most likely just guessing. This is probably one of the most enlightening tests any audio engineer can do; you will learn a lot about perception and your ability to hear things. Can you really trust your ears? Watch this.
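Because level differences as small as 0.2-0.5 dB can bias a comparison (see Loudness above), it helps to measure and match levels numerically before any blind test rather than trusting the peak meters. This sketch uses plain RMS level as a simple stand-in for loudness (an assumption: a perceptual measure such as ITU-R BS.1770 LUFS is more accurate) and computes the gain needed to bring render B to render A's level. The function names are hypothetical and the signals are synthetic; in practice you would load your two renders as lists or arrays of float samples.

```python
import math


def rms_dbfs(samples) -> float:
    """RMS level in dB relative to full scale (a sample value of 1.0)."""
    return 20 * math.log10(math.sqrt(sum(x * x for x in samples) / len(samples)))


def match_level(reference, other):
    """Scale `other` so its RMS level equals that of `reference`; return (scaled, gain_db)."""
    gain_db = rms_dbfs(reference) - rms_dbfs(other)
    factor = 10 ** (gain_db / 20)
    return [x * factor for x in other], gain_db


# Synthetic stand-ins for two renders of the same track; render B is 1.5 dB quieter.
SR = 44_100
render_a = [0.3 * math.sin(2 * math.pi * 220 * n / SR) for n in range(SR)]
render_b = [x * 10 ** (-1.5 / 20) for x in render_a]

matched_b, gain_db = match_level(render_a, render_b)
remaining_db = abs(rms_dbfs(render_a) - rms_dbfs(matched_b))
print(f"Applied {gain_db:+.2f} dB; remaining difference {remaining_db:.3f} dB")
if remaining_db > 0.5:
    raise ValueError("Levels differ by more than 0.5 dB; don't compare yet")
```

Boosting the quieter file can push its peaks past full scale, so with real material it is often safer to turn the louder render down instead.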

Invariably we are much less sensitive than we think and perception is far more complex than it seems. If your sense of infallibility in your own perception remains intact, we have an exercise for you:

An experiment - Render the same ~5 seconds of a project to a 320 kbps mp3 file and a 16 Bit 44.1 kHz wav (CD format) file, then make 30 A vs B blind comparisons. This means you must not know whether your helper is playing the mp3 or the wav file to you. You should also avoid eye contact with them and receive no feedback on how well you are doing until the experiment is completed. The helper should write down a list of 30 comparisons in random order (wav then mp3, or mp3 then wav), making sure you receive 15 mp3 vs wav and 15 wav vs mp3 trials, 30 in total, in a shuffled sequence. You don't need to do them all at once; if you need a break, take one, but don't confer with your helper about how you are doing. Your task is simply to identify the wav file (the better sounding one!). To convince a scientist, with a high degree of confidence, that you can tell them apart, you need to identify the wav file at least 20 times out of 30. Our untrained monkey, fresh from his 24 Bit 192 kHz experiment, will correctly identify the wav file about 15 times (chance). Surely, as mp3 is inferior to CD format, you can manage 20 correct identifications, or at least beat the untrained monkey?
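The helper's job in this experiment can also be scripted. This sketch builds the shuffled list of 30 trials (15 of each order) and scores a result with a one-sided binomial test: the probability of getting at least that many correct by pure guessing, with a 50% chance per trial. The 0.05 cut-off mentioned below is a common scientific convention rather than something defined in this manual, and the function names are illustrative.

```python
import random
from math import comb
from typing import List, Optional, Tuple


def make_schedule(trials: int = 30, seed: Optional[int] = None) -> List[Tuple[str, str]]:
    """Balanced play order: half the trials wav-then-mp3, half mp3-then-wav, shuffled."""
    half = trials // 2
    schedule = [("wav", "mp3")] * half + [("mp3", "wav")] * (trials - half)
    random.Random(seed).shuffle(schedule)
    return schedule


def p_value(correct: int, trials: int) -> float:
    """One-sided binomial test: chance of scoring `correct` or more by guessing (p = 0.5)."""
    return sum(comb(trials, k) for k in range(correct, trials + 1)) / 2 ** trials


# The helper's sheet (seeded only so the example is repeatable; use seed=None for real tests).
schedule = make_schedule(30, seed=1)
for i, (first, second) in enumerate(schedule[:3], start=1):
    print(f"Trial {i}: play {first}, then {second}")

# How surprising would each score be if the listener were only guessing?
for correct, trials in [(15, 30), (20, 30), (8, 10)]:
    print(f"{correct}/{trials} correct: p = {p_value(correct, trials):.3f}")
```

With these numbers, 15 of 30 (the monkey's score) gives p ≈ 0.57, while 20 of 30 gives p ≈ 0.049, just under the conventional 0.05 threshold, which is why the experiment asks for at least 20. 8 of 10 gives p ≈ 0.055, a borderline result that fits the more cautious "you may be able to hear something" wording used for the shorter test.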

Conclusions

First, all major DAW applications can process audio without introducing unwanted distortions, frequency response alterations or any other unwanted effect that would be 'clearly audible' enough to sway opinion. If you do hear something obvious, look for the obvious causes (listed above).

Second, we are not saying that high quality analog gear, microphones, mic preamps and even 24 bit recording don't have a place or don't matter. These things definitely have a place in the production phase. What we are saying is that the influence of the DAW in processing this information, AND of digital distribution formats beyond 16 bit @ 44.1 kHz, has been vastly exaggerated in the minds of the music-producing set. What matters most is mixing skill & performance. Both these 'essential elements' come from humans, not the technology.

Sometime in the late 1990s we passed the point where technical improvements to 'fidelity' had any meaningful impact on the audible quality of the music being produced. Further, with the Loudness Wars of the 2000s and the widespread adoption of low bit-rate mp3s as a music distribution standard, it's clear that audio quality has been going backwards for a while now, yet people are still enjoying their music. In conclusion, we'd like to leave you with a quote from photographer Vernon Trent:

"Amateurs worry about equipment, professionals worry about money, masters worry about light. I just take pictures"

References

1. Meyer, E. Brad, and David R. Moran. "Audibility of a CD-Standard A/D/A Loop Inserted into a High-Resolution Audio Playback." Journal of the Audio Engineering Society, Sept. 2007, pp. 775-779. This reference is discussed in the article The Emperor's New Sampling Rate.

Further reading & videos

- If you own FL Studio, and so have access to the Image-Line forums, we encourage you to look at the links posted in this Audio Quality / Audio Engine thread. Every platform gets the attacks - "Platform ABC is audibly superior to XYZ!". If it were true that one platform was audibly superior to the others, you wouldn't see such a wide distribution of platforms targeted as bad or poor in comparison to others. Note also how convinced the antagonists are there's a problem and how clearly they can hear it. They are convinced because they did hear it, but what they heard was something from the above list.

- The Science of Sample Rates (When Higher Is Better - And When It Isn't) - by Justin Coletti

- 24/192 Music Downloads...and why they make no sense - Xiph develop open source multimedia codecs including Ogg Vorbis (an mp3 alternative).

- Digital show and tell video - Monty at Xiph is back with well thought out, clearly explained real-time demonstrations of sampling, quantization, bit-depth and dither on real audio equipment, using both modern digital analysis and vintage analog bench equipment.

- Lavry Engineering, manufacturers of high-end A/D and D/A converter technologies, explain according to sampling theory why 44.1 kHz is enough and 192 kHz can actually be bad (PDF).

- Another great thread is this one at 'Gearslutz', where Paul Frindle (equipment designer) answers audio myths from the cloud. Paul has 35 years' experience in the pro audio and music industries. He has worked as a studio engineer in Oxford and Paris, and was a design engineer at SSL with responsibilities for E and G-series analogue consoles, emerging assignable consoles and nascent digital audio products. As one of the original team that became Sony Oxford, he is responsible for many revolutionary aspects of the Sony OXF-R3 mixing console. More recently he was responsible for product design and quality assurance at Oxford Plugins. On leaving Sony Oxford, he co-founded Pro Audio DSP to develop novel sound-processing applications that address many issues he had identified in the audio production chain over his career.

- Finally, see the Image-Line Audio Quality video Playlist at YouTube.

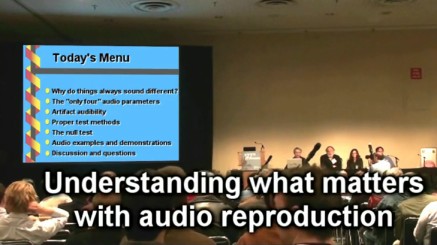

Audio Myths Workshop, Audio Engineering Society 2009

When you have an hour to spare one night, here's a video well worth watching. Ethan & co-presenters cover many of the issues discussed above, including placebo effects in audio, loudness vs quality, 'scam' equipment, dithering, expensive vs cheap audio devices and more.

Ethan Winer's YouTube video, Audio Myths Workshop AES 2009. You can learn more about the AES on their website.